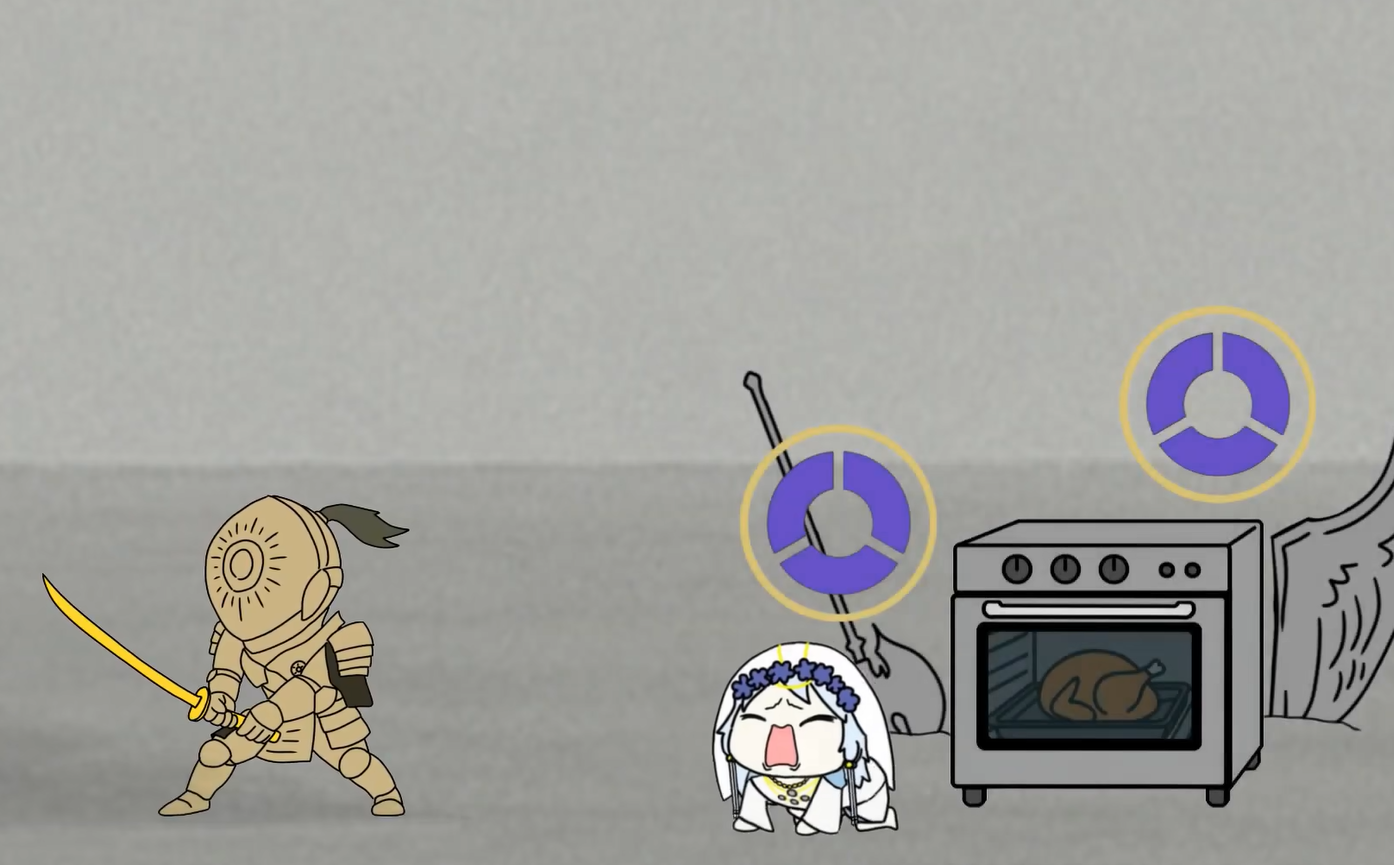

Play an 'Ugh~' Sound While Playing Nightreign Using a Discord Bot

As we know, Discord has a soundboard that lets you play all kinds of weird sounds in game. Sadly, it doesn’t work in full-screen games like ELDEN RING NIGHTREIGN. I used to voice chat with m...